Inspectors Are Finally Asking The Right Questions About AI

Ofsted's new research areas and the ISI's inspection framework both signal a massive shift in how technology is evaluated. The focus has moved from hardware and filtering logs to the psychological, pedagogical, and ethical impact of AI on pupil wellbeing, resilience, and cognitive development.

The 60-second Briefing

- Ofsted has published its new areas of research interest for 2026, focusing explicitly on how AI influences cognitive and socioemotional development.

- The Independent Schools Inspectorate (ISI) uses Framework 23 to ask the exact same questions through the lens of pupil wellbeing and leadership accountability.

- Inspectors are moving past basic infrastructure checks to interrogate whether technology helps or harms knowledge retention and analytical thinking.

- Both frameworks place a heavy emphasis on ethical considerations like algorithmic bias, synthetic media, and data privacy within safeguarding protocols.

- IT Directors and Senior Leadership Teams must use these frameworks to evaluate their digital strategies long before an inspector walks through the door.

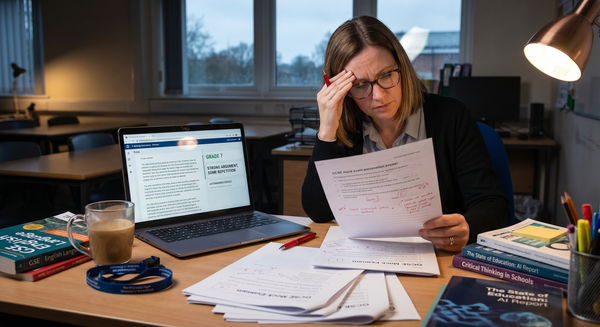

For a long time, the conversation between IT professionals and educational inspectors has felt entirely disconnected from the reality of how young people actually use computers. When it came to technology, inspections often leaned heavily towards infrastructure and safeguarding compliance. Inspectors wanted to see the web filtering logs, review the acceptable use policies, check the firewall configurations, and ensure that basic online safety protocols were in place. As long as the web filter was humming and the AUP was nicely laminated, everyone breathed a sigh of relief. We were essentially trying to ward off digital disaster with the power of glossy paper and a strongly worded PDF. These things are absolutely necessary, but they only scratch the surface of what technology is actually doing to the minds in our classrooms. We were being graded on the locks on the doors, not on what was happening inside the building.

That dynamic has just shifted significantly across both the state and independent sectors. On April 10, Ofsted published an updated document detailing its areas of research interest. A major section of this document is dedicated entirely to artificial intelligence, online harms, and digital literacy. Reading through the specific questions Ofsted is now asking reveals a profound change in perspective. They are no longer just looking at the hardware provision; they are actively interrogating the psychological and pedagogical impact of our digital strategies.

While state schools are digesting Ofsted's new AI research framework, independent schools cannot afford to look the other way on the assumption that this is solely a maintained-sector issue. The Independent Schools Inspectorate (ISI) evaluates every single aspect of school life through the lens of its current Inspection Framework, often referred to as Framework 23. This framework places the holistic evaluation of pupil wellbeing and mental health at the very centre of an inspection. When an ISI inspector walks through your door, they are using that exact framework to ask the same fundamental questions Ofsted is now formalising. They want to know exactly how your digital provision impacts the emotional and educational development of the children in your care.

One of the central questions Ofsted poses in its new document is: "How does using AI and other technology influence cognitive and socioemotional development? This may include problem-solving, critical thinking, knowledge retention, resilience, and forming and maintaining relationships."

This is the exact conversation we need to be having in every staff room in the country. It entirely validates the argument I made in The Illusion of Perfection. When artificial intelligence can generate a perfectly formatted, highly accurate essay in three seconds, the value of education has to move away from simply producing a finished output. We have to double down on teaching resilience, analytical thinking, and the messy, frustrating process of working through a difficult problem.

If our technology strategy simply makes life easier for pupils by removing all cognitive friction, we are actively damaging their socioemotional development. Consider the process of photography. When I take a picture, I want to frame the shot intentionally, adjust the light, and understand the depth of field. The effort is what produces a meaningful result. If a pupil uses a chatbot to bypass the frustration of a blank page, they never learn how to initiate a complex task. They are letting the machine frame the shot for them. ISI inspectors are looking for evidence that leaders actively promote wellbeing. A digital strategy that erodes a pupil's resilience and attention span is a direct failure of that standard. Both Ofsted and the ISI are now explicitly looking for evidence of this psychological impact.

The Ofsted framework also asks schools to consider whether the use of AI widens or narrows gaps between advantaged and disadvantaged learners. In the independent sector, it is remarkably easy to assume that because all our pupils arrive with expensive laptops, digital poverty is not our problem. But simply handing a pupil a piece of glass and aluminium does not magically bestow analytical thinking skills on them; it just means they can misunderstand the assignment in spectacular 4K resolution! Access to the hardware is only one small part of the equation. We have to consider whether our deployment of technology creates a divide between those who know how to interrogate and manipulate an AI system and those who simply consume its first answer passively.

If we just hand out generic chatbot access without teaching the underlying mechanics of prompt engineering and data evaluation, we are failing to prepare our pupils. We are creating a divide in cognitive access, even if the physical access is equal. Inspectors will want to see how we are teaching pupils to command the machine, rather than just letting the machine guide the pupil. Furthermore, Framework 23 places a massive emphasis on pupil voice. If inspectors ask pupils how technology makes them feel, and the answer is "anxious" or "distracted", the school has an immediate, documented problem.

Both inspectorates are focusing heavily on the ethical risks inherent in this new landscape. Ofsted is asking: "What risks and ethical considerations arise from AI and technology use... these may include bias, stereotyping, data privacy, intellectual property rights and safeguarding." Similarly, Section 3 of the ISI framework specifically evaluates how well schools protect pupils from harm and neglect, and this now heavily features digital threats.

The safeguarding landscape has moved far beyond simply blocking inappropriate URLs on the school Wi-Fi. We are dealing with synthetic media, algorithmic bias, and highly targeted digital harassment. We are seeing real-world examples of pupils using AI image generators to bully their peers. IT departments cannot sit in an office and rely on technical filters to solve these human problems. We have to work directly with pastoral staff to ensure pupils have the digital literacy required to navigate a manipulated online environment safely.

If an ISI inspector asks your pastoral lead how the school is protecting pupils from the psychological distress of synthetic media bullying, "we block the site" is no longer an acceptable answer. They expect to see a proactive, educational approach to media literacy. They expect to see that teachers understand the technology well enough to explain it to a teenager.

Perhaps the most important overlap between Ofsted's new focus and the ISI framework is the matter of accountability. The ISI places the burden of proof squarely on the shoulders of leadership and governance. As with the DfE standard updates I discussed in my recent post The End of the Black Box, inspectors expect the Senior Leadership Team and the governing board to truly understand the risks and benefits of the school's technology. It is not enough for the IT Director to know how the network operates; the Headteacher, the Bursar, and the governors have to be able to explain how the digital strategy benefits the pupils' education and social development.

This new scrutiny is an absolute gift to IT Directors who have been trying to force a more mature, strategic conversation about technology within their schools. I strongly suggest you print the Ofsted document and the relevant sections of the ISI framework and take them to your next Senior Leadership Team meeting. Work with your academic deputies and pastoral leads to articulate exactly how your current technology suite improves pupil resilience and knowledge retention, and how your RSHE curriculum addresses algorithmic bias.

We cannot afford to wait for an inspection to find out that our expensive digital platforms are actually eroding analytical thinking skills. We need to use these inspectorate questions as an internal audit tool today. If we cannot confidently answer how our technology strategy supports cognitive development, mitigates ethical harm, and actively promotes pupil wellbeing, we need to completely rethink what we are doing. We can no longer operate as a 'black box' IT department because the inspectors are finally ready to look inside.

See you in the digital staffroom.