The Metacognitive Trap: Why AI Access Isn't Enough

The OECD warns that generic AI risks causing "metacognitive laziness" in pupils. Following on from our look at intentional tech, we must turn this warning into a procurement rule: if an AI tool removes the cognitive friction required for learning, IT must refuse to buy it. Access is a marketing trick, not a strategy.

The 60-second Briefing

- The House of Commons Business and Trade Committee is investigating AI and the future workforce, and employers are demanding analytical thinkers, not passive consumers.

- The OECD Digital Education Outlook 2026 explicitly warns that giving young people general-purpose AI without clear pedagogical intent causes "metacognitive laziness".

- Research shows AI boosts short-term task performance, but those gains often vanish entirely under exam conditions when the tool is removed.

- Building on recent discussions about intentional tech, schools must establish a hard procurement rule against generic chatbots.

- IT departments must reject software that simply does the easy work for pupils, insisting instead on intelligent tutoring systems that introduce cognitive friction.

I wrote about cameras in my last post on inspections, but I want to dwell on that theme a little longer here, because the auto-versus-manual distinction lies at the heart of what the OECD has just labelled "metacognitive laziness".

When I am out trying to frame a shot, I have to actively consider the light, the subject, the depth of field, the ISO, and the framing. I have to understand how all those elements interact to create the final image. If I just set the camera to auto, point it in the general direction of something interesting, and snap away, I might end up with a perfectly acceptable, in-focus picture. But I will have learned absolutely nothing about the mechanics of photography. The camera did all the thinking for me. If someone then hands me a fully manual camera and asks me to replicate the shot, I will fail.

Some of us are doing the same thing with artificial intelligence in the classroom, and we are setting our pupils up for a massive fall.

The House of Commons Business and Trade Committee recently launched an inquiry into Artificial Intelligence, business and the future of the workforce. Predictably, employers are demanding a future workforce that knows how to manipulate these tools to increase productivity. They want analytical thinkers who can direct the machine, spot its subtle biases, and correct its logical flaws. They do not want passive consumers who accept an algorithm's first output as gospel truth.

Some school leadership teams, particularly in the independent sector, where the pressure to demonstrate value for money is higher than ever, have interpreted this workplace demand as a mandate to throw generic AI chatbots at our pupils. We buy the bulk licences, we put the shiny new AI wrapper on the school portal, and we tell prospective parents that we are preparing their children for the future workplace. It is a brilliant, highly effective marketing strategy, but it is fundamentally terrible pedagogy. It is the educational equivalent of painting racing stripes on a tractor and hoping it wins a Grand Prix.

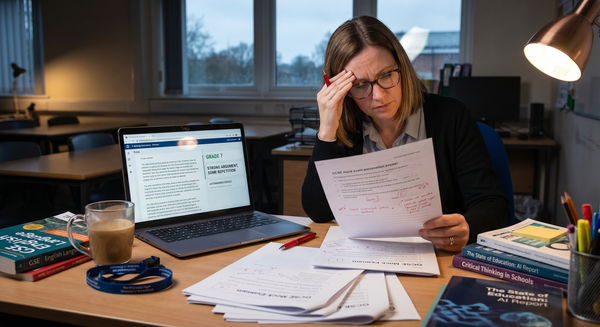

The newly released OECD Digital Education Outlook 2026 highlights a massive and largely ignored problem: giving pupils access to general-purpose AI without clear pedagogical intent creates what the report accurately terms "metacognitive laziness". It is a sobering read for anyone caught up in the EdTech hype cycle. The data shows that while these general-purpose tools boost short-term performance on specific tasks, that advantage vanishes in exam conditions when the AI is taken away.

The pupils are not learning the material; they are just offloading the heavy cognitive lifting to a server farm in Dublin. They are using the auto setting on the camera. They get the final essay, the completed worksheet, or the translated text, but they have bypassed the cognitive struggle required to actually embed that knowledge into their long-term memory.

As I wrote recently regarding Sweden's national tech reset, we cannot keep treating proximity to a screen as proximity to learning. We have to demand intentionality. In the independent sector, especially with 20% VAT on fees squeezing parental budgets, there is a distinct temptation to use AI access as a unique selling point. We want to show that our fees buy cutting-edge technology. But we need to be brutally honest with ourselves and our boards: access alone is cheap. Anyone can pay £20 a month for a premium chatbot at home. If our entire digital strategy revolves around granting access to tools that parents can buy themselves, we are not providing any tangible educational value.

Earlier this year, in "The Illusion of Perfection", I argued that if the machine does the easy work and occasionally hallucinates the hard stuff, schools must double down on teaching resilience and the messy process of failure. Giving pupils a generic chatbot robs them of that friction. It gives them the illusion of competence.

We now need to take that educational philosophy and turn it into a hard, non-negotiable procurement rule for the IT department.

IT Directors must stop acting as passive order-takers. Sit down with the academic deputies and the heads of department. Ask why a tool is actually being requested. If someone wants an AI to help pupils write essays faster, we should be empowered to refuse the purchase order and explain why. Faster output does not equal better learning. Efficiency is a metric for factories, not for human minds working through complex historical concepts. We need to challenge teaching staff to articulate how a tool will introduce desirable difficulty into a task, not strip it away.

If a piece of software removes the need for a pupil to think, the IT department should not buy it. That is the rule.

We must demand AI integration that acts as an intelligent tutor rather than a crutch. This means forcing pupils to evaluate, question, and refine the outputs the machine gives them. Consider a pupil writing a geography report. A generic chatbot will cheerfully churn out three thousand words on coastal erosion in the time it takes the pupil to find their pencil case. An intelligently designed, pedagogically focused AI tool, the kind of intentional technology we must insist upon, would instead ask the pupil to outline their argument first. It would then review the outline, point out a missing piece of context, and ask the pupil how they plan to address it. It forces the pupil to stay in manual mode. It forces them to adjust the aperture and the shutter speed themselves, rather than just hitting the button.

We need to stop buying generic, off-the-shelf wrappers and start demanding intelligent tutoring systems that push back against the pupil. The software should question their logic and nudge them towards the right answer without ever just handing it to them.

If we let our pupils coast on autopilot, allowing the machine to do the framing and set the focus, we fail to prepare them for the complex, demanding workplace that Parliament is currently investigating. Employers do not need people who can ask a machine to write a report; they need people who can read the machine's report and immediately spot its structural flaws. We need to dictate the terms of this technology, rather than letting the technology dictate the terms of our teaching.

By enforcing this procurement rule, the IT department becomes a genuine strategic partner in the academic life of the school. We stop wasting budget on marketing gimmicks and ensure that our schools remain places where the hard, frustrating, and ultimately rewarding work of human thinking actually happens. We have to guarantee that when the digital crutch is inevitably removed in an exam hall or a future boardroom, our pupils are the ones who still know how to stand on their own two feet.

See you in the digital staffroom.