The Illusion of Perfection

With AI automating entry-level jobs and generating instant 'perfect' answers, the value of education is shifting. If machines do the easy work, and sometimes hallucinate the hard stuff, our schools must double down on teaching resilience, critical thinking, and the messy process of failure.

The 60-Second Briefing

- The Automation Threat: AI is rapidly absorbing junior roles, evidenced by a 14% drop in creative agency staff over the past year. Simultaneously, tools like Google's new Workspace Studio let anyone deploy custom AI agents without writing a line of code.

- The Safeguarding Risk: Relying on AI for sensitive tasks is highly dangerous; recent reports show AI transcription tools fabricating severe warnings in official social care records.

- The Pedagogical Shift: AI gives pupils the illusion of instant perfection. Our response must be to champion enquiry-led learning and explicitly normalise the struggle of failing, learning, and repeating.

We are witnessing the hollowing out of the entry-level job market in real time. Last week, the Institute of Practitioners in Advertising reported a 14% drop in creative agency staff over the last year, with the sharpest decline among young workers aged 25 and under. The culprit? Artificial intelligence is quietly absorbing the junior, foundational tasks that used to serve as the training ground for young professionals.

Simultaneously, the barrier to creating this automation is hitting rock bottom. Just days ago, Google officially launched Workspace Studio, a tool that allows anyone to build and deploy custom AI agents across Gmail and Drive without writing a single line of code.

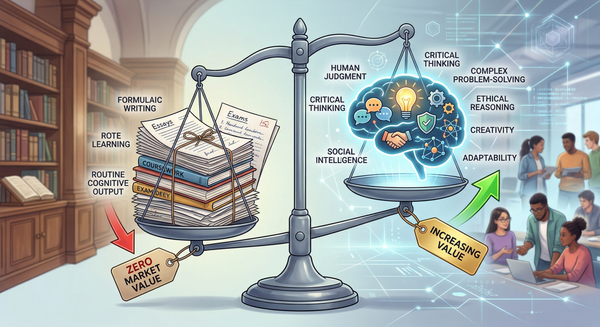

If you are an educator or an IT Director, this raises an existential question: if machines can generate flawless, instant output for routine tasks, what exactly is the value proposition of our schools?

The answer is not simply more technology. In fact, when we lean too heavily on AI to do the heavy lifting, the results can be catastrophic.

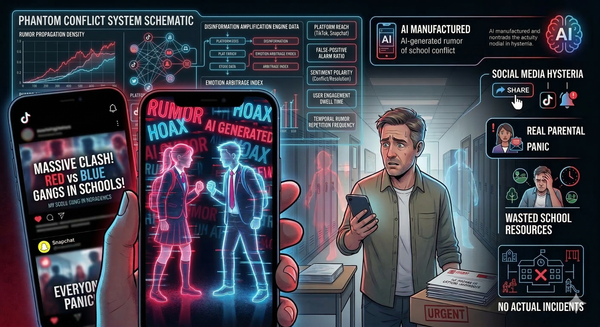

Consider an eye-opening report published last week by the Ada Lovelace Institute on the use of AI in social care. As local councils roll out AI transcription tools to summarise meetings with vulnerable children and adults, frontline social workers have discovered that these systems are hallucinating content and introducing critical errors. In one instance, an AI tool fabricated a warning about "suicidal ideation" in a client's official record, even though the topic was never discussed.

For any Designated Safeguarding Lead (DSL) or IT Director currently eyeing up an AI tool to automatically transcribe pastoral meetings or summarise safeguarding logs, let this be your warning bell. You cannot outsource human empathy or duty of care to a language model. The human must remain firmly, inextricably in the loop.

So, where is the good news in all of this? How do we prepare our pupils for a workforce that is aggressively automating, without relying on tools that routinely fabricate reality?

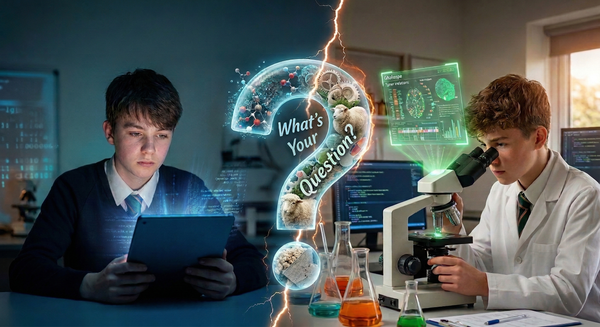

The antidote to the AI era came across my desk via a brilliant piece published by the HMC to mark the International Day of Women and Girls in Science.

Dr. Sion Wall, Head of Prep Science at Haberdashers' Monmouth School, wrote about the necessity of empowering girls in STEM through "enquiry-led pedagogy". But the detail that struck me most was his insistence on normalising failure. In his school's labs, there are signs that explicitly read: "Experiment, Fail, Learn, Repeat". He actively cultivates a school culture that normalises challenge and iteration, removing the intellectual fear of being wrong.

I love this. It is the exact strategic pivot our sector needs. Generative AI gives our pupils the dangerous illusion of instant, effortless perfection. It provides the polished essay or the completed code in seconds, entirely bypassing the struggle. But the struggle is where the learning actually happens.

If AI is going to take over the jobs that require instant, formulaic answers, then our schools must double down on teaching the messy, iterative process of failure. Whether it is a Year 1 pupil learning to run a science experiment, or a Sixth Former navigating the nuances of a complex pastoral issue, we have to provide the sandbox where it is safe to get things wrong.

We have spent the last few years arguing about how to stop pupils from using AI to cheat. Perhaps our real focus should be on ensuring they don't lose the resilience required to fail.

See you in the digital staffroom.