Repricing Human Judgement: The End of Traditional Assessment

As the parliamentary inquiry confirms AI is deeply embedded in schools, we must stop playing algorithmic catch-up. Adopting HEPI's 'FUTURES' framework lets us shift from assessing rote production to fundamentally repricing human judgement in education.

The 60-second Briefing

- The Westminster View: On 26 February 2026, the cross-party Education Select Committee launched a major inquiry to examine how AI is reshaping learning, critical thinking, and traditional assessment methods.

- The Classroom Reality: Recent survey data shows that 60 per cent of teachers now use AI for professional purposes, and with the same tools in pupils' pockets, traditional asynchronous coursework is effectively obsolete.

- Human Competency: On 5 March 2026, the Higher Education Policy Institute (HEPI) published the 'FUTURES' framework, advising institutions to put human competency at the centre of AI integration.

- Strategic Action: Schools must embrace the "repricing of human judgement", moving away from assessing text production towards verifiable capabilities like social intelligence and ethical reasoning.

It feels as though every week brings a new government initiative promising to work out exactly what we should be doing with artificial intelligence. One of the latest is the cross-party Education Select Committee inquiry, which aims to scrutinise how AI is affecting teacher workloads, critical thinking, and, significantly, traditional assessment methods. The inquiry is expansive: it looks into the risk of AI perpetuating digital inequalities between pupils and examines children's digital rights, specifically the delicate balance between their right to privacy and their right to participation.

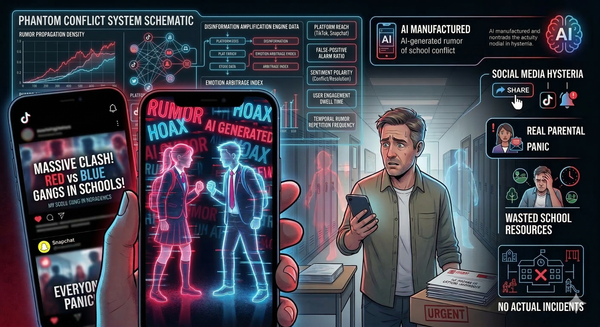

The parliamentary attention is welcome, but the reality on the ground, as the inquiry itself rightly notes, is that 60 per cent of teachers are already using AI for work, with over a fifth relying on it daily. The launch of this inquiry feels less like a proactive investigation and much more like a desperate attempt to map a landscape that has already been fundamentally terraformed. The technology has not politely waited in the corridor for a white paper to be published; it is already deeply embedded in our classrooms, our staffrooms, and our pupils' pockets. We simply do not have the luxury of waiting for Westminster to provide us with a neat set of instructions.

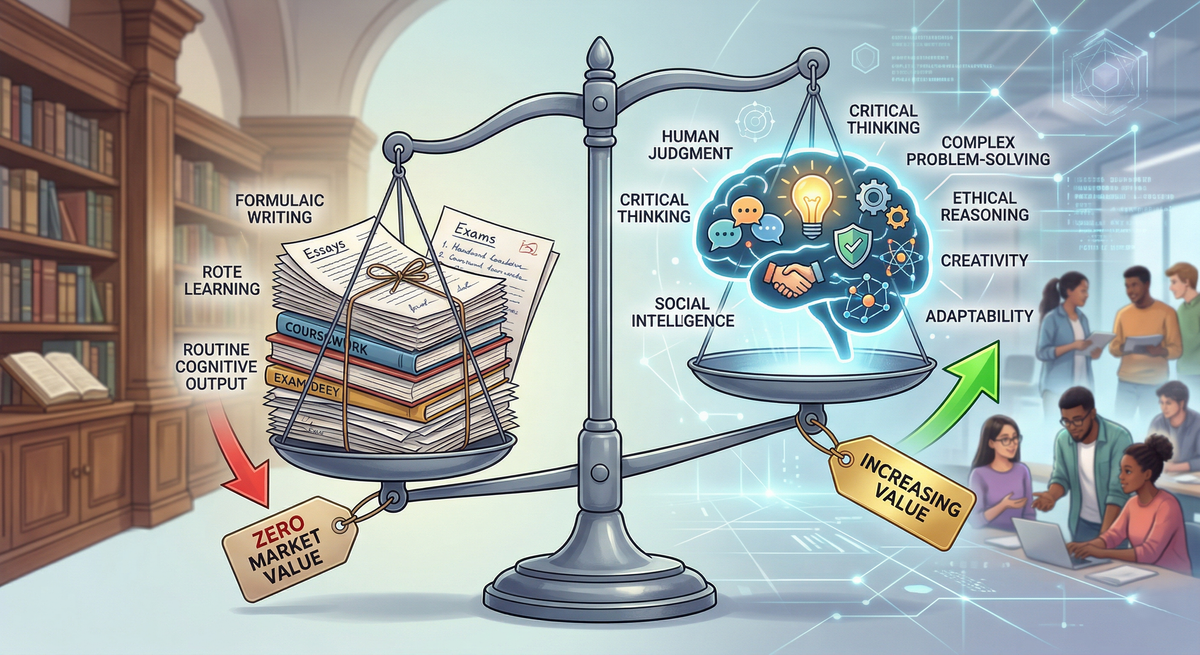

The true crisis that this inquiry must eventually confront is not whether AI should be used, but how its omnipresence is invalidating the established methods of educational assessment. For decades, the traditional model has relied almost exclusively on an asynchronous assessment of cognitive output. The essay, the coursework portfolio, and the take-home assignment: these were the unchallenged gold standards for measuring a pupil's grasp of a complex subject. Like it or not, generative AI has permanently compromised the integrity of these formats. As I argued recently in The Illusion of Perfection, when a freely available language model can instantly generate a cohesive, highly structured, flawless essay on any given topic, the mere ability to produce such a document ceases to be a reliable proxy for human intelligence or deep understanding.

This reality forces the education sector to confront a concept currently gaining traction in corporate strategy and investment circles: the fundamental repricing of human judgement. In a world where routine cognitive work and formulaic writing are commoditised by algorithms, the market value of those specific skills effectively drops to zero. As a result, the value of uniquely human capabilities – critical thinking, the ability to navigate moral ambiguity, ethical reasoning, and complex problem-solving – skyrockets.

Thankfully, the higher education sector has just handed us a blueprint for this transition, and I genuinely believe it is essential reading for any school leader looking past the end of this current term. In early March, the Higher Education Policy Institute (HEPI) released a new report titled Being indispensable: Capabilities for a human-AI world. Buried within this document is the 'FUTURES' framework, a brilliant, pragmatic model that argues we need to aggressively direct our educational focus towards the human competencies that algorithms simply cannot replicate.

For the past couple of years, the educational narrative surrounding AI has been exhaustingly defensive. If you step into any IT Director's office, you will likely hear the same frustrations. We have been asked to obsess over plagiarism checkers, worry endlessly about academic integrity, and build ever taller digital firewalls to keep the chatbots out of the coursework. It is a game of digital whack-a-mole that we are destined to lose.

The FUTURES framework politely but firmly suggests that we are fighting the wrong war entirely. Instead of trying to assess routine cognitive output, we need to be assessing entirely different capabilities. The framework spans seven core domains:

- Fluency in AI and Digital Systems

- Understanding Self and Wellbeing

- Technology Ethics and Responsibility

- Understanding Others and Social Intelligence

- Resilience and Adaptability

- Emerging Technology Awareness

- Society and Professional Engagement

Notice what is missing from that list? The ability to rote-learn and regurgitate a standard five-paragraph essay.

If a sixth former can use a generative tool to perfectly synthesise the historical causes of a conflict, the assessment should no longer be about whether they can write it down in a structured format. That specific skill has effectively been commoditised. The assessment must evolve into an oral examination in which the pupil identifies and challenges the biases inherent in the AI's output, or a collaborative, real-time negotiation based on those historical facts. It is a major shift from assessing rote production to assessing verifiable, in-person human judgement.

This is not just an abstract philosophical debate aimed exclusively at university vice-chancellors. It directly and urgently impacts how we prepare our pupils in the independent sector right now. Our parents are paying premium fees, and in the current economic climate – especially following the Court of Appeal's recent decision to uphold the application of VAT on school fees – they are scrutinising our value proposition more than ever. We cannot justify those fees by teaching pupils how to do the very things that software will do for free by the time they graduate. Integrating AI effectively means ensuring that our pupils remain genuinely indispensable in a labour market that is actively commoditising basic cognitive tasks. The HEPI domains, particularly technology ethics and social intelligence, are the exact areas we need to aggressively weave into our pastoral and academic curricula.

Education Secretary Bridget Phillipson recently remarked that AI could provide the "biggest boost for education in the last 500 years". But that boost will not come from simply digitising our old habits. It will come from having the courage to look at a piece of coursework and admit that if a machine can do it instantly, we probably should not be grading a human on it anymore. Let us use the momentum generated by these national discussions to finally dismantle legacy assessments that have outlived their usefulness and start building a curriculum that values the human mind for the brilliant, messy, and creative things the machine still cannot do.

See you in the digital staffroom.