Caught in the Crossfire: AI Innovation vs. Safeguarding Reality

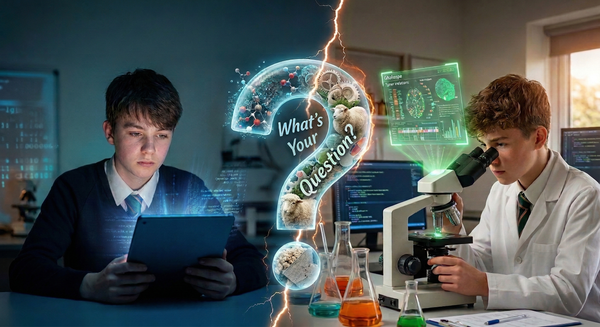

While schools are writing policies about academic honesty and parents are worrying about screen time, pupils have simply moved on, seamlessly integrating AI into their lives. Safety and learning happen in the messy middle where, instead of pretending we can block AI, we focus on proper governance.

The 60-Second Briefing

- The Paradox: We are watching the government pour £23m into EdTech testbeds while simultaneously consulting on a KCSIE 2026 draft that classifies AI deepfakes as a severe form of child-on-child abuse.

- The Pivot: Banning physical devices is easy, but blocking AI software is a fool's errand. We must shift our institutional strategy from blocking AI to actively governing it.

- The Blindspot: The sector's obsession with pupil "AI literacy" is distracting us from a more immediate risk: staff must understand that safe AI use is now a statutory safeguarding duty, not just an IT policy.

- The Win: The new KCSIE draft finally mandates a strict annual review of our filtering and monitoring systems, giving us the leverage to turn a casual check-in into a non-negotiable, properly budgeted health check of our digital defences.

It was half-term last week, which finally gave me a moment to catch my breath, step away from the daily operational fires, and process the absolute whiplash of the recent news cycle. Safer Internet Day 2026 brought with it the highly appropriate theme of "Smart tech, safe choices," but navigating the government announcements surrounding it felt like standing in the middle of a digital tug-of-war.

A couple of weeks ago, I wrote about drawing a hard boundary around technologies that distract while intentionally building bridges to tools that deepen pupil understanding. So I fully support Education Secretary Bridget Phillipson's recent letter instructing headteachers to "remove ambiguity" and enforce strict mobile phone bans. We should evict personal distractions from the classroom to protect our pupils' focus.

But dealing with the physical devices in their pockets is actually the easy part. The real challenge we face is governing the software running on the infrastructure we provide.

We are currently being funded to build world-class digital highways through the Department for Education's "Connect the Classroom" WiFi upgrades. The government is aggressively pushing us to embrace the future on these networks. Just recently, ministers announced a £23 million expansion of the EdTech testbeds pilot programme to evaluate how new tools perform in live classrooms. They have also launched a tender, backed by £1.5 million, to co-create AI marking and tutoring tools with teachers.

I wrote last week about how easy it is to be cynical about government IT projects and legislation. Politicians and tech executives get to stand on stages and paint utopian visions of closing the attainment gap and reducing teacher workload with personalised AI tutors. They get to fund the exciting, shiny front end of the technological revolution. But down in the trenches, we are the ones who have to deal with the fallout.

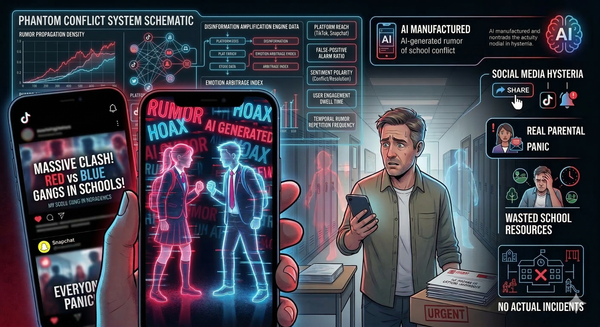

The newly launched consultation for Keeping Children Safe in Education (KCSIE) 2026 brings this stark reality into focus. For the first time, this draft guidance explicitly categorises AI-generated deepfakes and the sharing of synthetic intimate images as a severe form of child-on-child abuse. This perfectly encapsulates the innovation versus protection paradox. The government gets to promote the limitless potential of artificial intelligence, while IT Directors are the ones configuring web filters to block the growing number of "undressing" apps. We have to enforce the protection while everyone else celebrates the innovation.

This is why our strategy must evolve. Banning smartphones is the correct move for pupil wellbeing, but it does not remove AI from their lives. The technology is already baked into the browsers and search engines, even on their school-issued laptops.

Research released last week by the UK Safer Internet Centre and Nominet contains statistics that should completely reframe your next SLT meeting. A staggering 97 percent of young people aged eight to seventeen are now using AI tools. They are using them to save time, to get advice, and yes, to do their homework. And guess what: only 31 percent of parents actually believe their children are using AI to help with schoolwork. Furthermore, 60 percent of teens in that same report are actively worried about AI being used to create inappropriate pictures of them.

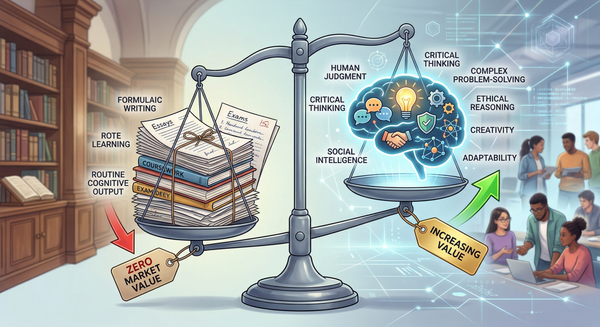

Welcome to the Governance Gap. While schools are busy writing policies about academic honesty and parents are worrying about general screen time, the pupils have simply moved on. They have integrated artificial intelligence into their lives as seamlessly as they did Google Search.

If we continue to treat AI solely as a cheating tool or a threat to be blocked at the firewall, we drive this usage underground. We create a shadow curriculum where pupils learn to use AI to bypass our rules, rather than to use it safely. Because of this paradox, it is time for a fundamental strategic pivot. We must move from a mindset of "blocking AI" to a framework of "governing AI." Governing AI means accepting its presence on our networks and building the scaffolding - the policies, the auditing, the cultural expectations - to ensure it is used transparently.

This brings me to a critical piece of feedback for our sector. Right now, there is a massive obsession with teaching "AI literacy" to pupils. It is a noble goal, but it entirely misses the immediate institutional vulnerability. We are eagerly trying to teach pupils how to prompt, while many of our own staff still haven't grasped that safe technology use is no longer just a matter for an acceptable use policy gathering dust on the staff intranet. It is a statutory safeguarding requirement.

The KCSIE 2026 consultation proposes adding specific paragraphs on the use of generative AI directly into Part Two, which covers the management of safeguarding. If adopted, this means the safe use of AI becomes a safeguarding duty that every member of staff must actively manage. Until our teachers and leaders internalise that AI safety is their responsibility too, all the pupil literacy programmes in the world will not protect us.

But amidst the chaos, there is genuinely good news regarding how the DfE is approaching our infrastructure. There is a massive win hidden in the KCSIE 2026 draft consultation that gives IT Directors the leverage we have been fighting to secure for years.

The requirement for reviewing filtering and monitoring systems has been significantly sharpened. Previously, we were told to review them "regularly," which was an ambiguous guideline that often saw these critical audits slip down the priority list as the term got busy. The new draft explicitly demands that governing bodies review filtering and monitoring provisions at least annually. This changes the conversation from a casual check-in to a mandated, budgeted annual health check of our digital defences. It moves filtering from being just a tech job to a non-negotiable governance standard.
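For anyone who wants to make that annual deadline visible rather than leaving it in a calendar reminder, here is a minimal sketch of the idea in Python. It is an illustration only: the `ReviewRecord` structure, the system names, and the reading of "at least annually" as "within 365 days of the last completed review" are my assumptions, not anything specified in the KCSIE draft.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "at least annually" is interpreted as no more than 365 days
# between completed reviews. Adjust to match your own governance calendar.
REVIEW_INTERVAL = timedelta(days=365)


@dataclass
class ReviewRecord:
    """Hypothetical record of when a system was last formally reviewed."""
    system: str
    last_reviewed: date


def days_until_due(record: ReviewRecord, today: date) -> int:
    """Days remaining before the next review is due (negative if overdue)."""
    due = record.last_reviewed + REVIEW_INTERVAL
    return (due - today).days


def overdue_systems(records: list[ReviewRecord], today: date) -> list[str]:
    """Names of systems whose review date has already passed."""
    return [r.system for r in records if days_until_due(r, today) < 0]


# Illustrative data only; these names and dates are made up.
records = [
    ReviewRecord("web filtering", date(2025, 1, 10)),
    ReviewRecord("device monitoring", date(2025, 9, 1)),
]

if __name__ == "__main__":
    today = date(2026, 2, 20)
    for r in records:
        print(f"{r.system}: {days_until_due(r, today)} days until review is due")
    print("Overdue:", overdue_systems(records, today))
```

A list like this is only useful if governors actually see it, so the output belongs in the termly governance report rather than in an IT ticket queue.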

There is more good news to be found. Alongside the continually updated digital and technology standards, the DfE has announced it is exploring a move to an HTML-based format for KCSIE, stepping away from the traditional, static PDF. It might sound like a mundane administrative tweak, but it represents something much larger. It signals a long-overdue culture shift. The government is finally treating school IT infrastructure, policy, and compliance as a serious, dynamic professional utility. For too long, school IT was treated as an afterthought to the real business of teaching. This move shows a recognition that our digital estate is the very foundation upon which modern education and safeguarding rest.

We can have the fastest WiFi in the world and the strictest phone bans in the country, but neither of those things, on its own, actually keeps children safe or helps them learn. As I noted when discussing intentional technology, we must carefully curate the digital environment. Safety and learning happen in the messy middle. They happen when we admit that our kids are using AI, and instead of pretending we can block it all, we build the frameworks to govern it.

The infrastructure that matters most in 2026 is not the cabling in the ceiling; it is the governance in the boardroom.

See you in the digital staffroom.